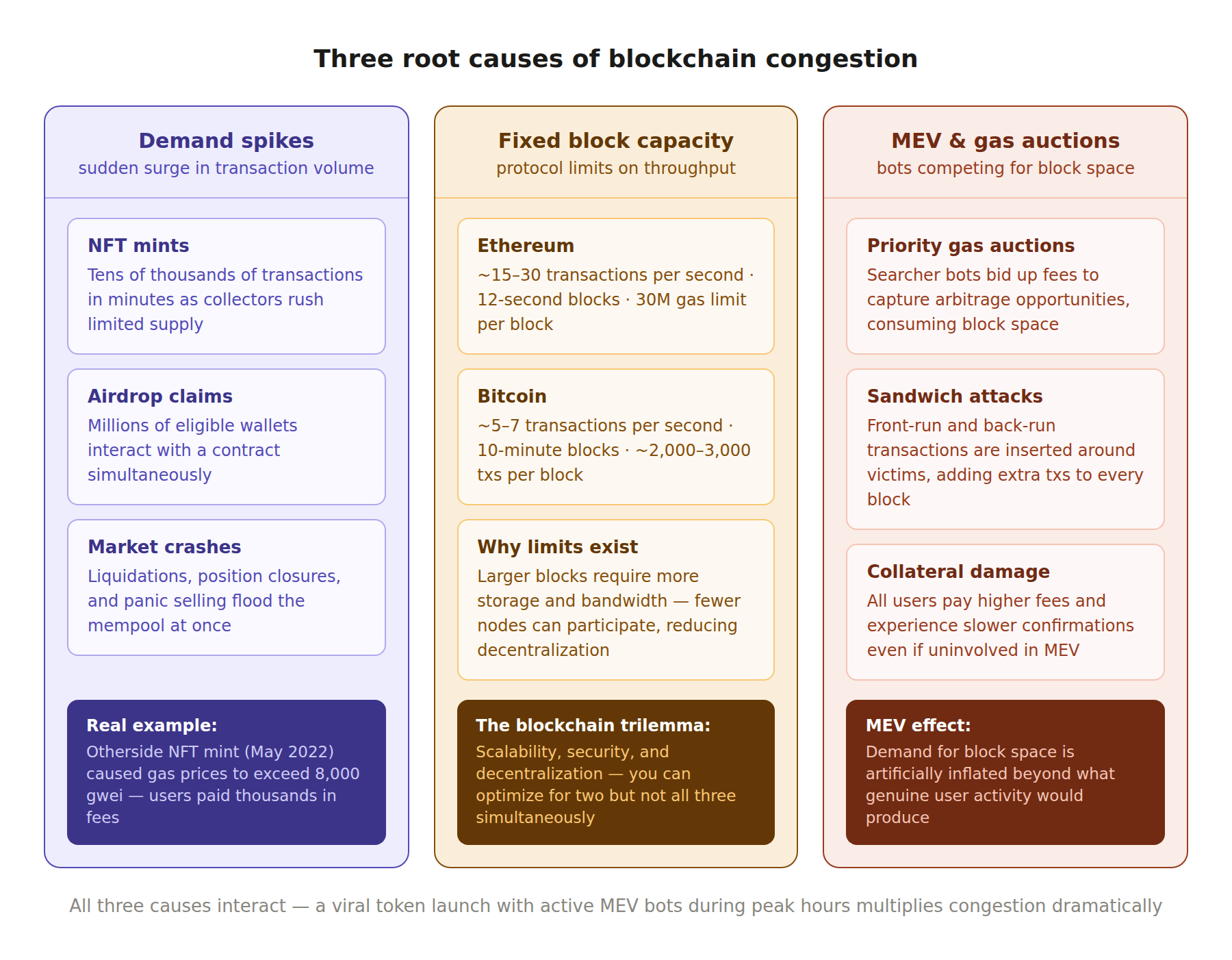

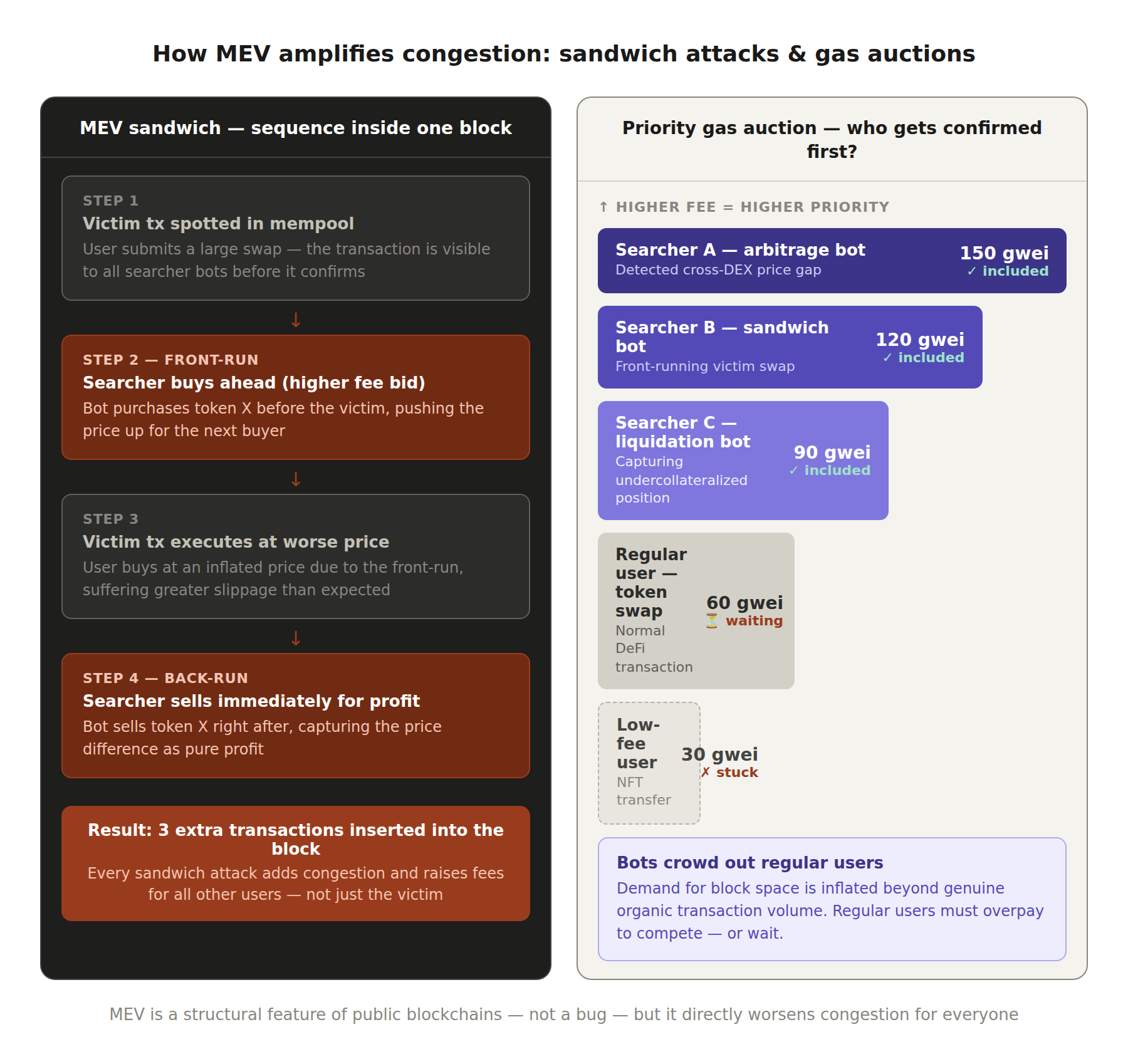

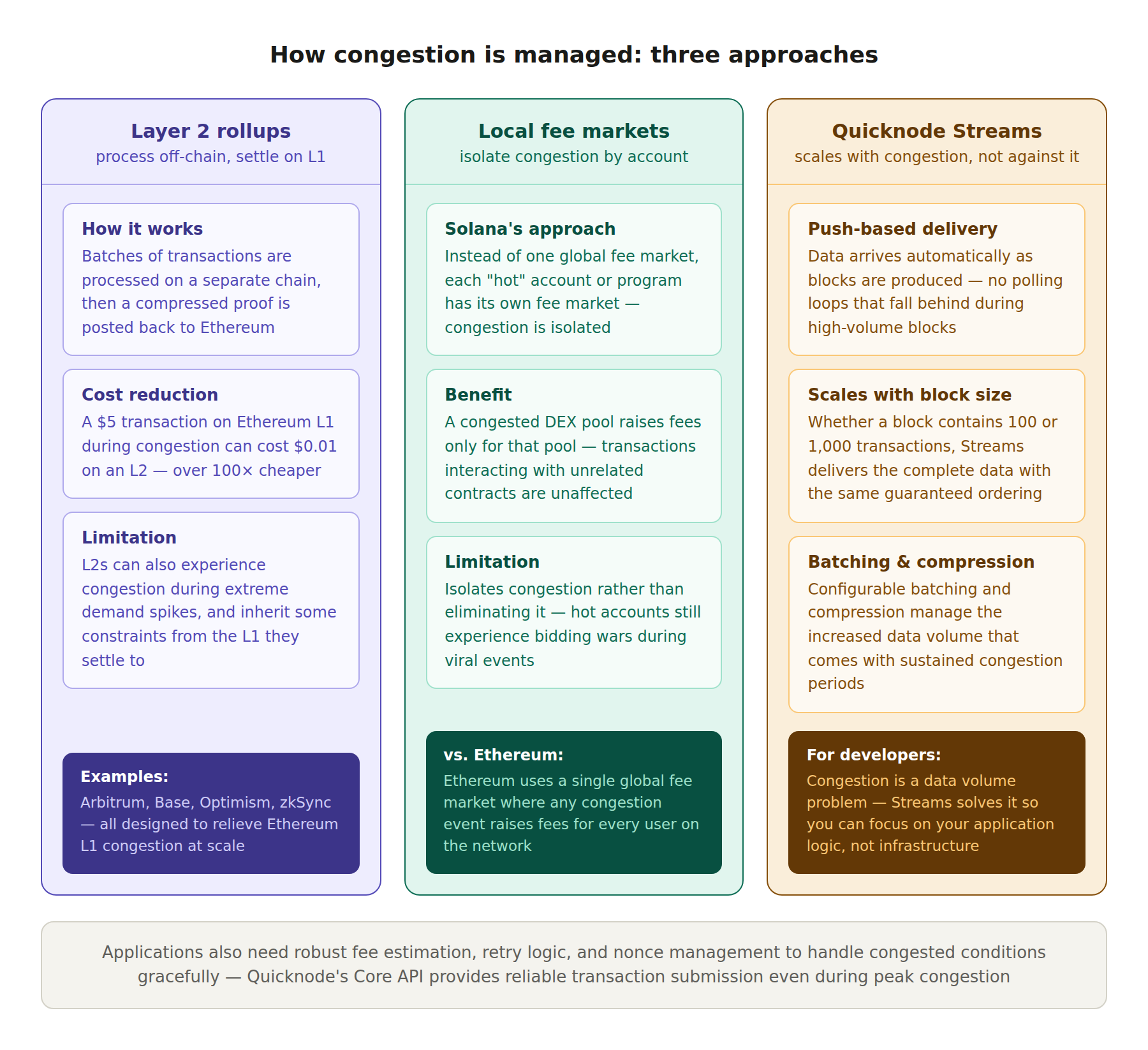

TL;DR: Blockchain congestion occurs when more transactions are submitted to the network than can fit in the next block. Every blockchain has a maximum amount of computation or data it can process per block, and when demand exceeds that limit, a backlog forms in the mempool (the waiting area for unconfirmed transactions). Congestion leads to higher transaction fees (as users compete for limited block space), longer confirmation times, and degraded user experience. The root causes include fixed block capacity, demand spikes from popular events, fee market dynamics, and the fundamental design tradeoffs that prioritize security and decentralization over raw throughput.

The Simple Explanation

A blockchain processes transactions in batches called blocks, and each block has a capacity limit. On Ethereum, this limit is expressed as a gas limit (around 30 million gas per block for several years; validators can vote it up or down, so the live figure changes over time). On Bitcoin, it is a weight limit (4 million weight units per block). On Solana, it is a combination of compute units and account locks per slot. Regardless of the specific mechanism, every chain has a ceiling on how much work it can do per block.
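To make the ceiling concrete, here is a rough back-of-the-envelope sketch for Ethereum. It assumes the 30 million gas figure quoted above, the 21,000 gas cost of a plain ETH transfer, and 12-second slots. Real blocks mix many transaction types that use far more gas each, so treat the result as an upper bound for the simplest case rather than a measured throughput.

```python
# Back-of-the-envelope ceiling for plain ETH transfers.
BLOCK_GAS_LIMIT = 30_000_000  # figure quoted in the text; the live limit changes over time
GAS_PER_TRANSFER = 21_000     # gas used by a plain ETH transfer
SLOT_SECONDS = 12             # Ethereum slot time


def max_transfers_per_block(gas_limit: int = BLOCK_GAS_LIMIT,
                            gas_per_tx: int = GAS_PER_TRANSFER) -> int:
    """How many identical transactions fit under the block gas limit."""
    return gas_limit // gas_per_tx


def max_transfers_per_second(gas_limit: int = BLOCK_GAS_LIMIT,
                             gas_per_tx: int = GAS_PER_TRANSFER,
                             slot_seconds: int = SLOT_SECONDS) -> float:
    """Per-block ceiling spread over the time between blocks."""
    return max_transfers_per_block(gas_limit, gas_per_tx) / slot_seconds


print(max_transfers_per_block())             # 1428
print(round(max_transfers_per_second(), 1))  # 119.0
```

Anything beyond roughly 1,428 simple transfers per block has to wait, no matter how many users are submitting.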

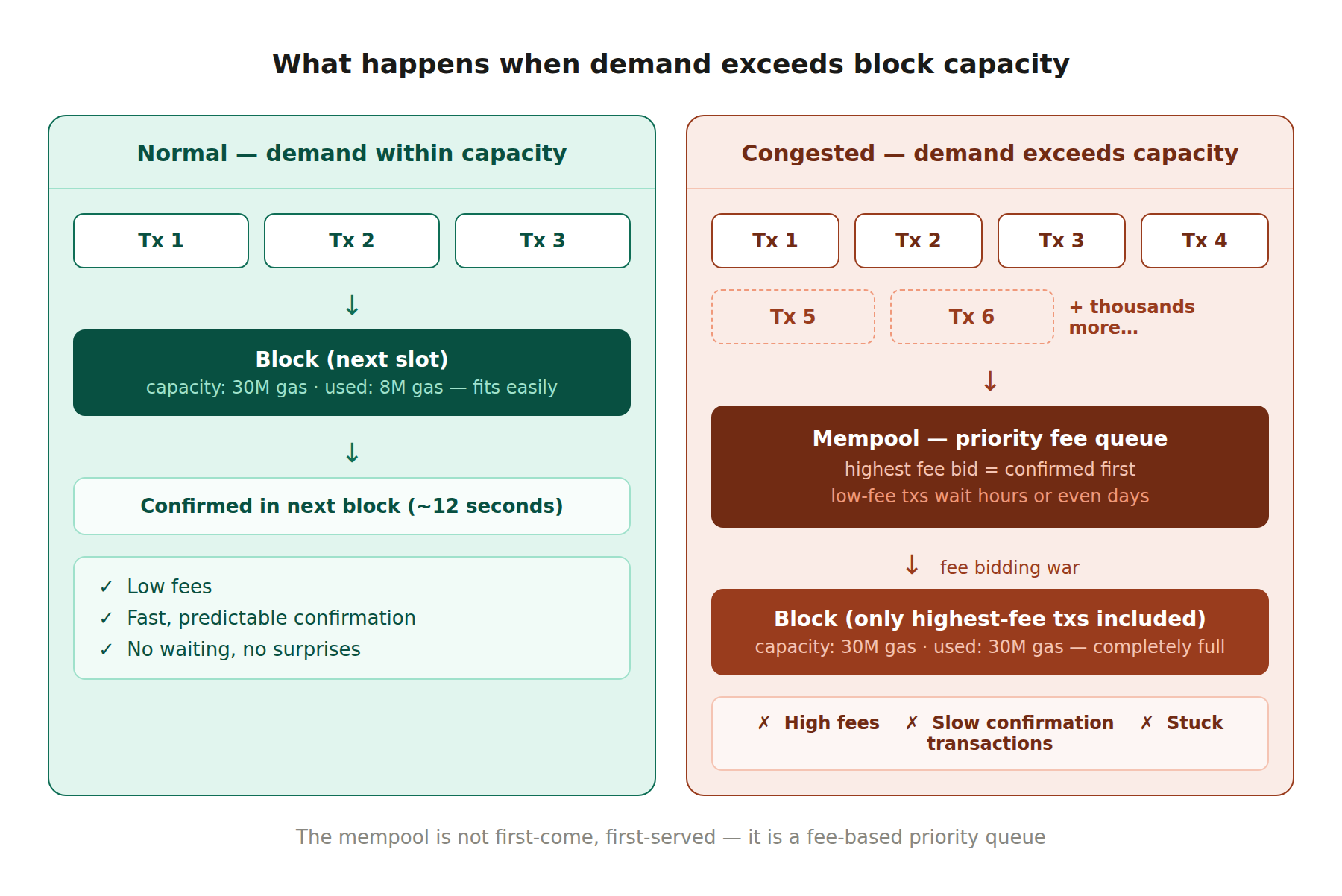

When the number of transactions being submitted to the network is lower than the block capacity, everything runs smoothly. Transactions are included in the next block, fees are low, and confirmation is fast. When the number of transactions exceeds the block capacity, a queue forms. This queue is the mempool, a holding area maintained by each node where valid but unconfirmed transactions wait for inclusion in a future block.
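The queueing behavior can be sketched with a toy simulation. The function name and the per-block numbers below are illustrative assumptions, not data from any real network; the point is only that the backlog stays at zero while arrivals are under capacity and grows by the excess every block once they are over it.

```python
def simulate_backlog(arrivals_per_block, capacity_per_block):
    """Return the number of transactions left waiting after each block."""
    backlog = 0
    history = []
    for arrivals in arrivals_per_block:
        backlog += arrivals                          # new transactions join the queue
        included = min(backlog, capacity_per_block)  # a block cannot exceed its capacity
        backlog -= included
        history.append(backlog)
    return history


# Hypothetical workloads against a capacity of 100 transactions per block.
quiet = simulate_backlog([80, 90, 70, 95], capacity_per_block=100)
busy = simulate_backlog([150, 150, 150, 150], capacity_per_block=100)

print(quiet)  # [0, 0, 0, 0]
print(busy)   # [50, 100, 150, 200]
```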

The mempool is not first-come, first-served. In practice it behaves like a fee-based priority queue. Block producers (miners or validators) generally select the transactions that pay the most per unit of block space (per gas on Ethereum, per unit of weight on Bitcoin) because that maximizes their revenue. When blocks are full, users who want their transactions confirmed quickly must outbid everyone else by offering a higher fee. This bidding war is what causes gas prices to spike during congestion. Users who are unwilling or unable to pay the elevated fees see their transactions sit in the mempool for minutes, hours, or sometimes days.
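A minimal sketch of fee-priority selection looks like the following. The `PendingTx` type, `build_block` function, and fee values are hypothetical, and the greedy strategy is a simplification: real clients also account for things like per-sender transaction ordering and protocol fee rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    gas: int          # block space the transaction consumes
    fee_per_gas: int  # what the sender offers per unit of gas (illustrative units)


def build_block(mempool, gas_limit):
    """Greedily pick the highest-paying transactions that still fit."""
    selected = []
    gas_used = 0
    for tx in sorted(mempool, key=lambda t: t.fee_per_gas, reverse=True):
        if gas_used + tx.gas <= gas_limit:
            selected.append(tx)
            gas_used += tx.gas
    return selected


mempool = [
    PendingTx("a", 21_000, 5),
    PendingTx("b", 21_000, 40),
    PendingTx("c", 50_000, 12),
    PendingTx("d", 21_000, 25),
]

block = build_block(mempool, gas_limit=100_000)
included = [tx.tx_id for tx in block]
waiting = [tx.tx_id for tx in mempool if tx not in block]

print(included)  # ['b', 'd', 'c']
print(waiting)   # ['a']
```

Transaction `a` arrived first in the list but offered the lowest fee, so it is the one left waiting. To get in, its sender would have to raise the bid above what at least one of the included transactions paid.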

Demand Spikes

The most visible cause of congestion is a sudden surge in demand for block space. These spikes are often triggered by specific events. A popular NFT mint can generate tens of thousands of transactions within minutes as collectors rush to secure limited-edition tokens. A DeFi protocol launch or airdrop claim attracts a flood of users interacting with new smart contracts simultaneously. A major market crash triggers a wave of liquidations, position closures, and panic selling as DeFi participants scramble to adjust their positions.
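A spike leaves a backlog that can only drain through whatever capacity is left over after normal demand. The sketch below estimates how long that takes. The function name and all numbers are made up for illustration, and it assumes baseline demand stays constant after the spike, which real traffic rarely does.

```python
import math


def blocks_to_clear(spike_txs, capacity_per_block, baseline_per_block):
    """Blocks needed to drain a one-off burst, or None if it never drains."""
    spare = capacity_per_block - baseline_per_block
    if spare <= 0:
        return None  # no spare capacity: the backlog cannot shrink
    return math.ceil(spike_txs / spare)


# Hypothetical: a mint dumps 20,000 extra transactions on a chain that fits
# 1,400 per block while ordinary demand already uses 1,000 of those slots.
print(blocks_to_clear(20_000, 1_400, 1_000))  # 50
print(blocks_to_clear(20_000, 1_400, 1_400))  # None
```

With 12-second blocks, 50 blocks is about 10 minutes of elevated fees from a burst that may have arrived in seconds. The closer ordinary demand already sits to capacity, the longer each spike lingers.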